Business Process Model and Notation (BPMN) provides a standardized way to visualize workflows. However, visual clarity does not guarantee execution correctness. A common pitfall in process modeling is the creation of a deadlock. This occurs when a process instance reaches a state where no further progress is possible, yet the workflow has not completed. Understanding the mechanics of flow control, gateways, and synchronization is essential for building robust systems.

🧠 Understanding the Process Deadlock

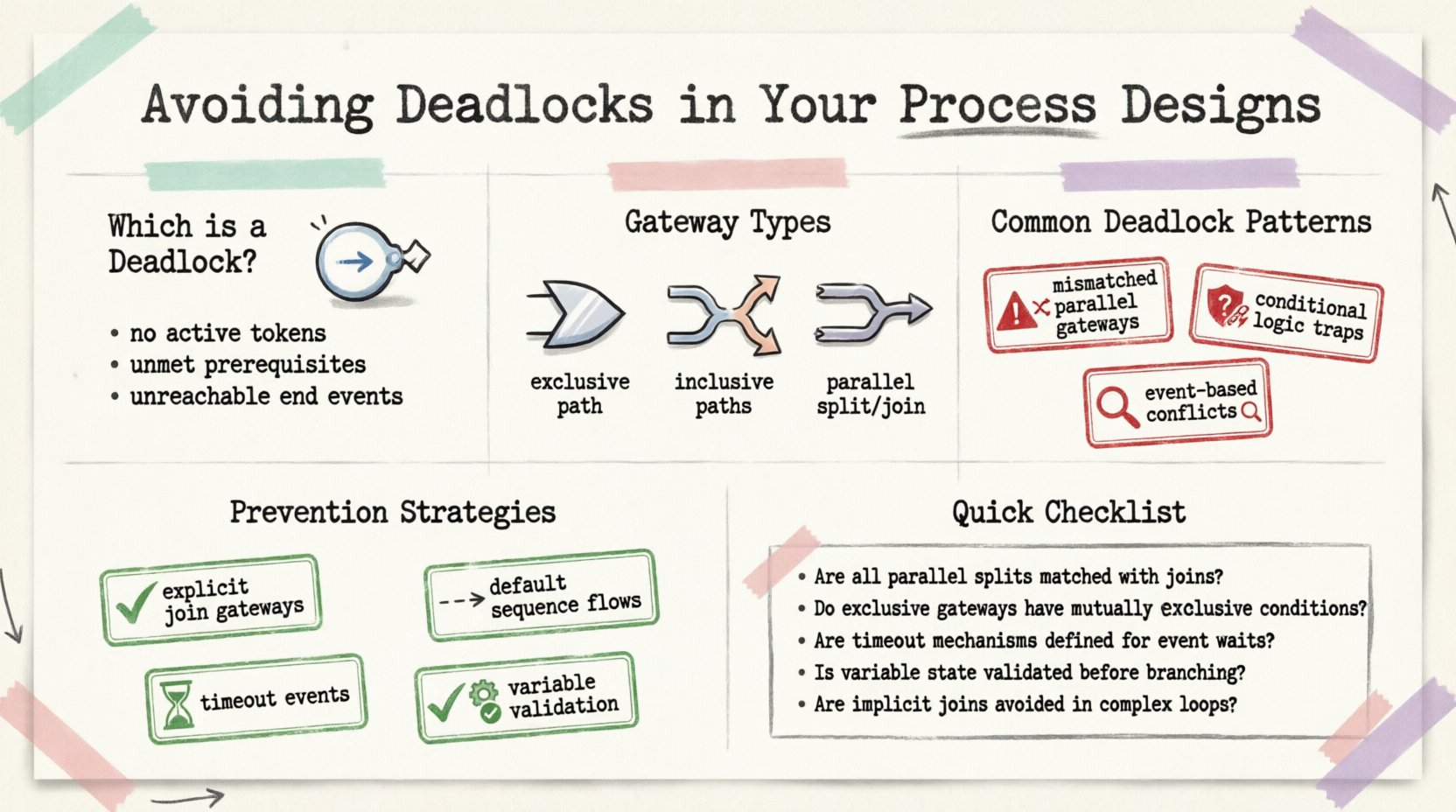

A deadlock in a BPMN diagram represents a state where tokens are stuck. In the execution engine, tokens represent the flow of control through the process. When a token enters a region of the diagram and cannot move forward due to missing conditions or unmet dependencies, the process halts indefinitely. This is often referred to as a blocking state. It differs from a livelock, where tokens keep moving (for example, around a loop) but the process never gets closer to completion.

Why does this matter? A halted process impacts operational efficiency and user experience. If a customer order gets stuck in a payment verification loop, revenue is delayed. If an employee onboarding workflow freezes, hiring timelines suffer. Preventing these states requires a deep understanding of how the engine interprets sequence flows.

Key Characteristics of Deadlocks

- Stalled Tokens: The process engine keeps waiting for input that will never arrive.

- Unmet Prerequisites: A gateway requires tokens from multiple paths, but one path is blocked.

- Unreachable End Events: The process cannot reach its termination point.

- Inconsistent State: Variables required for conditional logic are undefined or null.

🚦 The Mechanics of Gateways

Gateways are the decision points in BPMN. They control how tokens flow through the diagram. Misconfiguring these elements is a common cause of deadlocks. There are three primary types of gateways relevant to flow synchronization:

- XOR Gateway: Exclusive choice. Only one outgoing path is taken based on conditions.

- OR Gateway: Inclusive choice. One or more outgoing paths can be taken.

- AND Gateway: Parallel split and join. As a split, it activates all outgoing paths at once. As a join, it waits for a token on every incoming path before proceeding.

Deadlocks frequently occur at AND Gateways when splitting and joining logic is mismatched. For example, if a parallel split creates two paths, both must arrive at a subsequent join gateway to release the token. If one path ends prematurely or loops back incorrectly, the token waits forever.
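The waiting behavior of an AND join can be sketched in a few lines of Python. This is a toy model rather than any engine's implementation; the class `ParallelJoin` and the flow names `check_credit` and `check_stock` are hypothetical and chosen only for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ParallelJoin:
    """Toy AND join: fires only once every incoming flow has delivered a token."""

    incoming: frozenset[str]
    arrived: set[str] = field(default_factory=set)

    def receive(self, flow_id: str) -> bool:
        """Record a token on `flow_id`; return True when the join can fire."""
        if flow_id not in self.incoming:
            raise ValueError(f"unknown incoming flow: {flow_id}")
        self.arrived.add(flow_id)
        if self.arrived == self.incoming:
            self.arrived.clear()  # consume the tokens and release one downstream
            return True
        return False

    def missing(self) -> set[str]:
        """Flows the join is still waiting on."""
        return set(self.incoming) - self.arrived


# Hypothetical flow names, for illustration only.
join = ParallelJoin(incoming=frozenset({"check_credit", "check_stock"}))
first = join.receive("check_credit")   # join cannot fire yet
waiting_on = join.missing()            # the flow that has not delivered a token
second = join.receive("check_stock")   # both tokens arrived, join fires
```

If the `check_stock` branch ended early or looped away, `missing()` would keep reporting it and the join would never fire, which is the deadlock described above.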

⚠️ Common Patterns Causing Deadlocks

Identifying risky patterns helps modelers correct designs before deployment. The following scenarios are frequent sources of blocking states.

1. Mismatched Parallel Gateways

When you split a flow into parallel tasks using an AND Gateway, you create multiple tokens. To join these back into a single flow, you need a corresponding AND Gateway. If you use an XOR Gateway to join parallel paths, nothing is synchronized: each token passes straight through, so the downstream activities run once per token. The deadlock arises in the reverse mismatch. If an XOR Gateway splits the flow and an AND Gateway joins it, only one branch ever carries a token, and the AND join waits indefinitely for the others.
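One way to reason about split and join pairings is to count tokens. The sketch below assumes the standard token semantics (an AND split emits one token per branch, an XOR split emits exactly one, an AND join needs one token per branch, an XOR join forwards each token as it arrives). The function names are hypothetical.

```python
def tokens_after_split(split_type: str, branches: int) -> int:
    """Number of tokens a split emits across `branches` outgoing paths."""
    if split_type == "AND":
        return branches
    if split_type == "XOR":
        return 1
    raise ValueError(f"unsupported split type: {split_type}")


def classify_pair(split_type: str, join_type: str, branches: int) -> str:
    """Classify what happens when `split_type` is later merged by `join_type`."""
    produced = tokens_after_split(split_type, branches)
    if join_type == "AND":
        # An AND join needs one token per incoming branch.
        return "ok" if produced == branches else "deadlock"
    if join_type == "XOR":
        # An XOR join forwards every token, so extra tokens repeat downstream work.
        return "ok" if produced == 1 else "duplicate execution"
    raise ValueError(f"unsupported join type: {join_type}")


matched = classify_pair("AND", "AND", branches=2)
starved = classify_pair("XOR", "AND", branches=2)
unsynchronized = classify_pair("AND", "XOR", branches=2)
```

Under these assumptions, only the XOR split followed by an AND join starves the join. The AND split followed by an XOR join does not block, but it is still a modeling error because downstream work runs twice.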

2. Conditional Logic Traps

Conditional expressions on outgoing sequence flows determine which path is taken. If the conditions on all outgoing paths evaluate to false and no default flow exists, the token has nowhere to go. For example, if the outgoing paths check for status == 'approved' and status == 'rejected', but the actual value is 'pending', no path matches. Depending on the engine, the instance then either raises a runtime error or stalls at the gateway.
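A quick way to spot this trap before deployment is to enumerate the values a variable can take and check that at least one condition matches each of them. The following is a minimal sketch; `uncovered_states` and the flow names are hypothetical, and real gateway conditions would be written in the engine's expression language rather than as Python lambdas.

```python
from __future__ import annotations

from typing import Callable


def uncovered_states(
    flows: dict[str, Callable[[str], bool]], possible_values: list[str]
) -> list[str]:
    """Return the values for which no outgoing flow condition is true."""
    return [
        value
        for value in possible_values
        if not any(condition(value) for condition in flows.values())
    ]


# Hypothetical outgoing flows of an exclusive gateway, keyed by target task.
flows = {
    "ship_order": lambda status: status == "approved",
    "notify_customer": lambda status: status == "rejected",
}

gaps = uncovered_states(flows, ["approved", "rejected", "pending"])
```

Here `gaps` contains `"pending"`, the one state that no outgoing path handles.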

3. Event-Based Gateway Conflicts

Event-based gateways allow the process to wait for a specific event before proceeding. If multiple events are configured, the first one to occur triggers the path. However, if the events are mutually exclusive and none occur within a reasonable timeframe, the process waits. Without a timeout mechanism, this is a deadlock.
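The race between events can be modeled as picking the earliest arrival, with a timer acting as one more competitor. This is a simplified sketch that uses assumed arrival times instead of real events; `resolve_event_gateway` and the event names are hypothetical.

```python
from __future__ import annotations

from typing import Optional


def resolve_event_gateway(
    arrivals: dict[str, Optional[float]], timer_s: Optional[float] = None
) -> Optional[str]:
    """Return the event that fires first, or None if the gateway waits forever.

    `arrivals` maps an event name to its arrival time in seconds, or None if the
    event never occurs. `timer_s` adds a timer event as a fallback branch.
    """
    candidates = {name: t for name, t in arrivals.items() if t is not None}
    if timer_s is not None:
        candidates["timer"] = timer_s
    if not candidates:
        return None  # nothing will ever trigger: the instance is stuck
    return min(candidates, key=candidates.get)


silent = {"payment_received": None, "order_cancelled": None}
no_timer = resolve_event_gateway(silent)
with_timer = resolve_event_gateway(silent, timer_s=3600.0)
paid = resolve_event_gateway({"payment_received": 120.0, "order_cancelled": None}, timer_s=3600.0)
```

Without the timer, the silent case returns `None`, which corresponds to a gateway that never releases its token.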

📊 Gateway Behavior Comparison

Understanding the specific behavior of gateways is crucial for avoiding synchronization errors. The table below outlines how different gateways handle incoming and outgoing tokens.

| Gateway Type | Split Behavior (Out) | Join Behavior (In) | Deadlock Risk |
|---|---|---|---|
| AND (Parallel) | Creates tokens for all paths | Waits for a token on every incoming path | High if paths are unbalanced |
| XOR (Exclusive) | Creates one token for one path | Passes each arriving token through without waiting | Medium if no condition matches and there is no default flow |
| OR (Inclusive) | Creates tokens for matching paths | Requires all active paths to arrive | High if active paths are not tracked |
| Event-Based | Waits for event occurrence | Triggers on first event | High without timeouts |

🛡️ Prevention Strategies

Once you understand the mechanics, you can apply specific strategies to prevent deadlocks. These techniques focus on ensuring that every path has a clear exit and that synchronization is explicit.

1. Explicit Join Gateways

Always ensure that every split has a corresponding join of the same type. If you split a flow into two parallel tasks, verify that both tasks converge at an AND Gateway before continuing. Do not let parallel paths merge directly into an activity without a gateway: in standard BPMN semantics this is an uncontrolled merge, so the activity starts once for every arriving token instead of waiting for all of them.

2. Default Sequence Flows

Use default sequence flows on XOR gateways. A default flow is the path taken if no other condition is met. This acts as a safety net. If a token reaches a gateway and none of the specific conditions are true, it follows the default path. This prevents the token from disappearing into a void.
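The safety-net behavior of a default flow can be sketched as a router that falls back when no condition matches. The function `route_exclusive` and the task names are hypothetical; they only illustrate the idea and do not mirror a particular engine's API.

```python
from __future__ import annotations

from typing import Callable

Condition = Callable[[dict], bool]


def route_exclusive(
    flows: list[tuple[str, Condition]], default_flow: str, variables: dict
) -> str:
    """Return the first flow whose condition holds, else the default flow."""
    for target, condition in flows:
        if condition(variables):
            return target
    return default_flow  # no condition matched: take the safety-net path


# Hypothetical conditional flows, evaluated in order.
flows = [
    ("ship_order", lambda v: v.get("status") == "approved"),
    ("notify_customer", lambda v: v.get("status") == "rejected"),
]

approved = route_exclusive(flows, "manual_review", {"status": "approved"})
pending = route_exclusive(flows, "manual_review", {"status": "pending"})
unset = route_exclusive(flows, "manual_review", {})
```

Both the unexpected `pending` status and the missing variable end up in `manual_review` rather than leaving the token without a path.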

3. Timeout Events

For processes waiting on external inputs, implement timer events. If a user does not respond to a task within a set time, the process should move to an alternative path (e.g., escalation or auto-cancellation). This prevents the process from waiting forever.
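Outside a BPMN engine, the same pattern appears as a blocking wait with a deadline. The sketch below uses Python's standard `queue.Queue` as a stand-in for the channel on which a user response arrives; `wait_for_response` and the returned path names are hypothetical.

```python
import queue


def wait_for_response(inbox: queue.Queue, timeout_s: float) -> str:
    """Wait for a reply; fall back to an escalation path when the timer expires."""
    try:
        reply = inbox.get(timeout=timeout_s)
    except queue.Empty:
        return "escalate"  # plays the role of a timer event firing
    return f"continue:{reply}"


answered = queue.Queue()
answered.put("approved")
silent = queue.Queue()  # nobody ever responds on this one

on_time = wait_for_response(answered, timeout_s=0.05)
timed_out = wait_for_response(silent, timeout_s=0.05)
```

The important design choice is that the wait always has an upper bound, so the caller gets a definite outcome in either case.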

4. Variable Validation

Ensure that all variables used in conditional expressions are initialized before the gateway. A null value can cause a condition to evaluate incorrectly. Implement logic to set default values at the start of the process or at the point of data creation.
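As a minimal sketch of this idea, the helpers below fill in defaults and report variables that are still unset before a gateway evaluates them. The names `with_defaults`, `missing_variables`, and the variables `status` and `amount` are assumptions made for illustration.

```python
from __future__ import annotations


def with_defaults(variables: dict, defaults: dict) -> dict:
    """Return a copy of `variables` where missing or None entries use defaults."""
    merged = dict(defaults)
    merged.update({k: v for k, v in variables.items() if v is not None})
    return merged


def missing_variables(variables: dict, required: list[str]) -> list[str]:
    """List required variables that are absent or None."""
    return [name for name in required if variables.get(name) is None]


# Hypothetical process variables as they might arrive from an upstream task.
raw = {"status": None, "customer_id": "C-17"}

before = missing_variables(raw, ["status", "amount"])
prepared = with_defaults(raw, {"status": "pending", "amount": 0})
after = missing_variables(prepared, ["status", "amount"])
```

Running the check both before and after applying defaults makes it clear which values were supplied by the process and which were filled in.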

🔍 Debugging and Testing

Even with careful design, deadlocks can occur due to complex runtime conditions. Testing is the final line of defense. Follow these steps to validate your process models.

- Trace Token Flow: Use simulation tools to watch tokens move through the diagram. Look for tokens that stop moving unexpectedly.

- Check Event Subprocesses: Ensure that interrupting events do not cancel the main flow while other parallel tasks are still running.

- Review Error Handling: Verify that error boundary events are attached to tasks that might fail. If a task fails and no boundary event catches the error, the instance may stall or be terminated, depending on how the engine handles unhandled errors.

- Validate Data Context: Ensure that data passed between tasks is sufficient to satisfy downstream conditions.
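Simulation can be complemented by a simple static check: treat the diagram as a directed graph and look for nodes from which no end event is reachable. The sketch below assumes the model is available as a plain adjacency mapping; `nodes_without_path_to_end` and the node names are hypothetical. It only catches structural dead ends, not deadlocks caused by gateway semantics or runtime data.

```python
from __future__ import annotations

from collections import deque


def nodes_without_path_to_end(
    flows: dict[str, list[str]], end_events: set[str]
) -> set[str]:
    """Return every node that cannot reach any end event via sequence flows."""
    reverse: dict[str, list[str]] = {}
    nodes = set(flows) | set(end_events)
    for source, targets in flows.items():
        for target in targets:
            nodes.add(target)
            reverse.setdefault(target, []).append(source)

    # Walk backwards from the end events, marking everything that can reach them.
    reached = set(end_events)
    pending = deque(end_events)
    while pending:
        node = pending.popleft()
        for predecessor in reverse.get(node, []):
            if predecessor not in reached:
                reached.add(predecessor)
                pending.append(predecessor)
    return nodes - reached


# Hypothetical model: the rework branch loops and never reaches "end".
model = {
    "start": ["review"],
    "review": ["approve", "rework"],
    "approve": ["end"],
    "rework": ["wait_for_fix"],
    "wait_for_fix": ["rework"],
}

dead = nodes_without_path_to_end(model, {"end"})
```

In this example `dead` contains `rework` and `wait_for_fix`, the two nodes trapped in the loop.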

Common Pitfalls Checklist

- Does every AND split have a corresponding AND join, and every AND join a corresponding split?

- Are all XOR gateways using default flows?

- Are event subprocesses interrupting the correct flow?

- Is there a timeout for external waits?

- Are variable names consistent across the diagram?

🔄 Advanced Scenarios: Message Flows

When processes involve external systems, message flows introduce additional complexity. Unlike sequence flows, message flows represent communication between pools or participants. A deadlock can occur if a message is sent but never received, or if the receiving process expects a message that is never triggered.

To mitigate this:

- Use Intermediate Message Events: These clearly mark where the process waits for a response.

- Implement Compensation: If a message transaction fails, define a compensation activity to reverse previous actions.

- Correlation Keys: Ensure that message correlation keys are unique and correctly mapped to process variables.
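The role of correlation keys can be illustrated with a small in-memory registry that maps each key to the process instance waiting for it. This is a hedged sketch, not how any specific engine stores subscriptions; `MessageCorrelator`, the key format, and the instance identifiers are hypothetical.

```python
from __future__ import annotations

from typing import Optional


class MessageCorrelator:
    """Toy registry that maps a correlation key to one waiting process instance."""

    def __init__(self) -> None:
        self._waiting: dict[str, str] = {}

    def subscribe(self, key: str, instance_id: str) -> None:
        """Register an instance that waits for a message with this key."""
        if key in self._waiting:
            # A duplicate key would make delivery ambiguous, so reject it early.
            raise ValueError(f"correlation key already in use: {key}")
        self._waiting[key] = instance_id

    def deliver(self, key: str) -> Optional[str]:
        """Return the instance to resume, or None if nobody waits for this key."""
        return self._waiting.pop(key, None)


correlator = MessageCorrelator()
correlator.subscribe("order-1001", "instance-A")

resumed = correlator.deliver("order-1001")   # matches the waiting instance
unmatched = correlator.deliver("order-9999")  # no subscriber: message is not consumed
repeated = correlator.deliver("order-1001")   # already consumed
```

An unmatched delivery is the situation to watch for in logs: the sender believes the message went out, while the waiting instance never resumes.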

📝 Final Summary

Designing a BPMN model that avoids deadlocks requires attention to detail regarding gateways, tokens, and data flow. By understanding the synchronization requirements of AND gateways and ensuring that conditional logic covers all possible states, you can create resilient processes. Regular testing and simulation are vital to catch issues before they impact production environments. Focus on explicit synchronization and default paths to maintain control over the process lifecycle.

Adopting these practices ensures that your process designs remain efficient and reliable. Continuous review of process logs can also help identify potential deadlocks that arise from real-world data variations not present during initial modeling.